Elon Musk, along with several other influential figures in the tech industry, has urged artificial intelligence labs to stop developing AI systems more sophisticated than GPT-4, the most recent large language model from OpenAI. In an open letter endorsed by Musk and Apple co-founder Steve Wozniak, the signatories called for a six-month suspension of advanced AI development, citing potential dangers to society. As one of OpenAI’s co-founders, Musk has repeatedly expressed concerns that the organization is straying from its initial mission.

Numerous technology leaders have joined Elon Musk in urging AI labs to halt the development of systems capable of rivaling human intelligence.

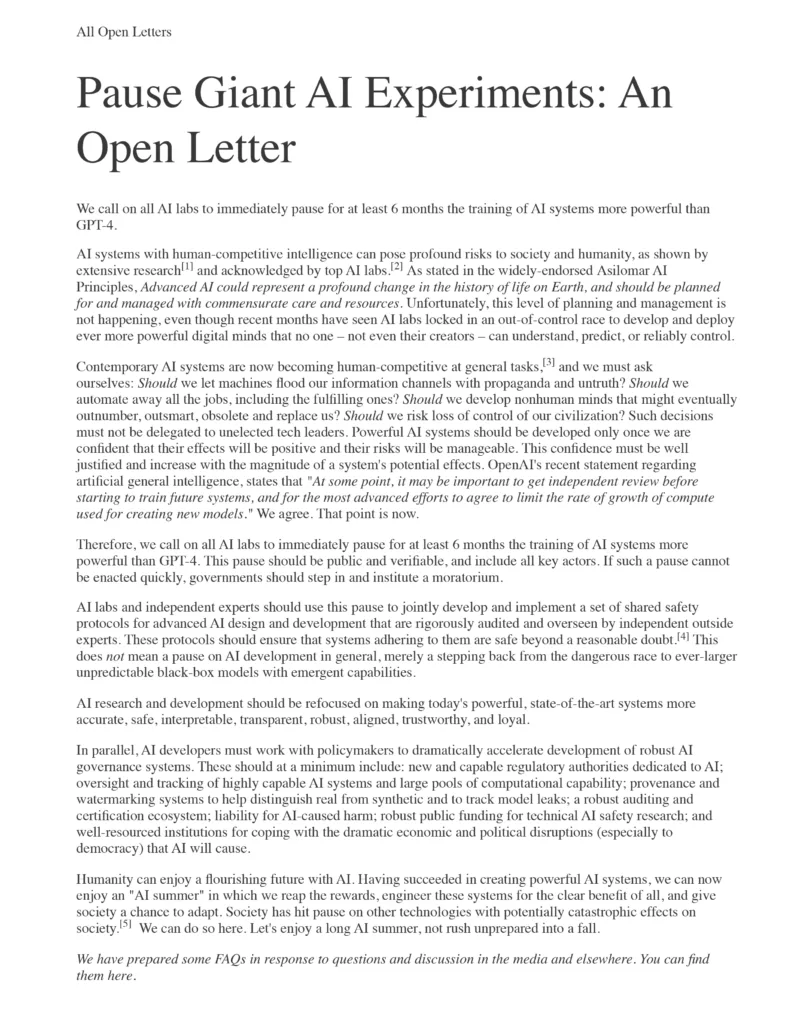

The open letter, issued by the Future of Life Institute and signed by Musk, Apple co-founder Steve Wozniak, and 2020 presidential candidate Andrew Yang, urged AI labs to stop training models more powerful than OpenAI’s GPT-4, the latest version of the large language model developed by the American startup.

“Current AI systems are approaching human competitiveness in general tasks, and we must consider: Should we allow machines to inundate our information streams with disinformation and propaganda? Should we replace all jobs, even rewarding ones, with automation? Should we create nonhuman minds that could eventually surpass, outpace, render us obsolete, and supplant us? Are we willing to risk losing control of our civilization?” the letter stated.

The letter further emphasized that “Such choices should not be entrusted to unelected tech executives.”

Based in Cambridge, Massachusetts, the Future of Life Institute is a nonprofit organization advocating for the responsible and ethical advancement of artificial intelligence. Founding members include MIT cosmologist Max Tegmark and Skype co-founder Jaan Tallinn.

The institute has previously secured commitments from Musk and Google’s DeepMind not to develop lethal autonomous weapon systems.

The institute is now requesting that all AI labs “immediately halt training AI systems more powerful than GPT-4 for a minimum of six months.”

Launched earlier this month, GPT-4 is believed to be significantly more advanced than its predecessor, GPT-3.

The letter also suggested that “If such a pause cannot be swiftly implemented, governments should intervene and impose a moratorium.”

ChatGPT, the popular AI chatbot, has amazed researchers with its ability to generate human-like responses to user inputs. Within two months of its launch, ChatGPT had already garnered 100 million monthly active users, making it the fastest-growing consumer application in history.

Trained on vast quantities of internet data, the technology has been employed for tasks ranging from crafting poetry reminiscent of William Shakespeare to composing legal opinions on court cases.

However, AI ethicists have also voiced concerns over possible misuses of the technology, such as plagiarism and the spread of misinformation.

In the Future of Life Institute’s letter, technology leaders and scholars argue that AI systems with human-competitive intelligence pose “significant risks to society and humanity.”

The letter advocated that “AI research and development should concentrate on enhancing the accuracy, safety, interpretability, transparency, robustness, alignment, trustworthiness, and loyalty of today’s state-of-the-art systems.”

OpenAI, which is supported by Microsoft, has reportedly secured a $10 billion investment from the Redmond, Washington-based technology behemoth. Microsoft has also integrated OpenAI’s GPT natural language processing technology into its Bing search engine to enhance its conversational capabilities.

In response, Google announced its own consumer conversational AI product named Google Bard.

Musk has previously stated that he views AI as one of the “greatest threats” to civilization.

Musk co-founded OpenAI in 2015 alongside Sam Altman and others. However, he stepped down from OpenAI’s board in 2018 and no longer has an ownership stake in the company.

Musk has recently criticized the organization on several occasions, stating his belief that it is deviating from its original objectives.

As AI technology continues to advance at a rapid pace, regulators are scrambling to keep up. On Wednesday, the UK government released a white paper on AI that leaves it to existing regulators to oversee the use of AI tools in their respective sectors by applying existing laws.

Original source: here